Please wait...

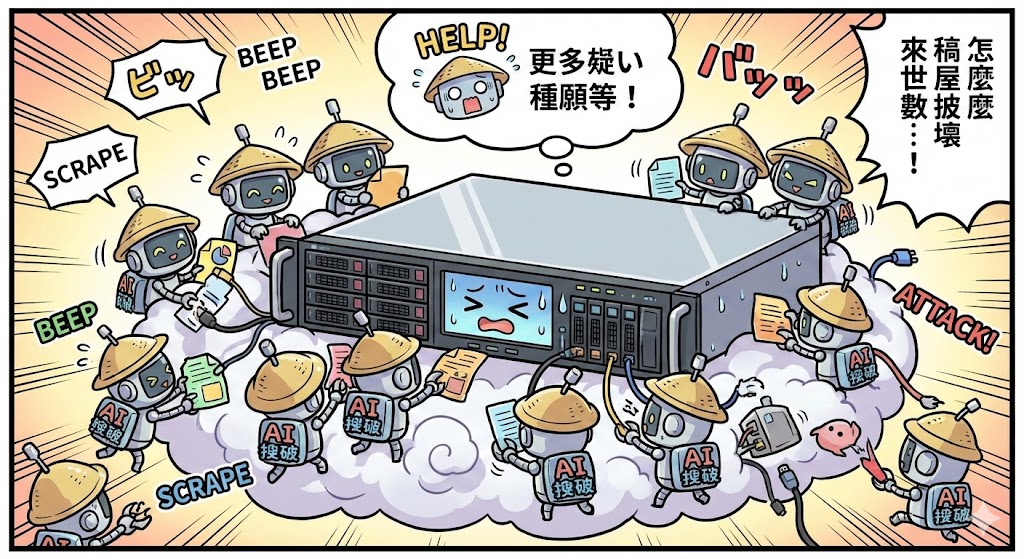

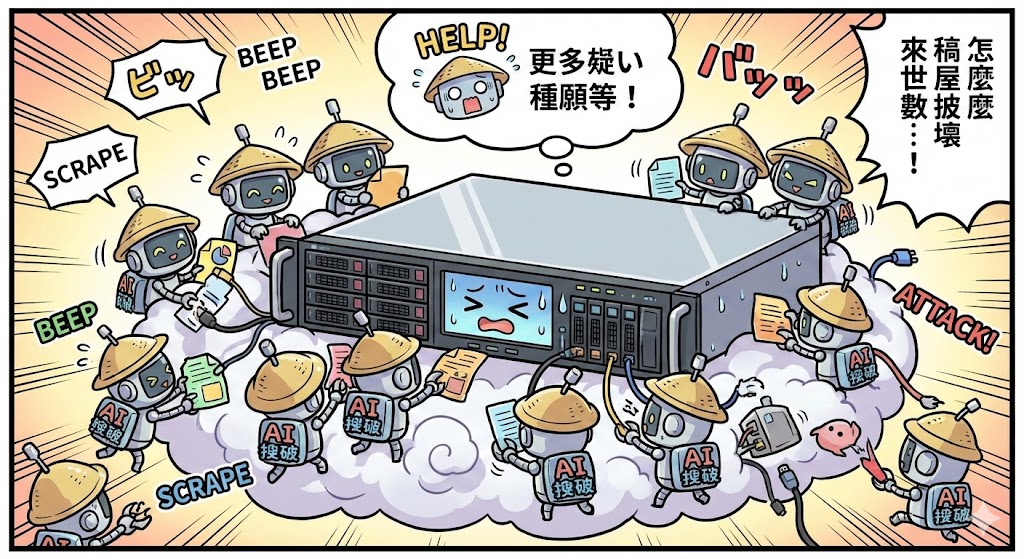

Due to continuous high load from AI scraper bots, there is a rate-limit delay, followed by a button to press.

Ready!

The rate limit delay is complete. Press the button to continue.

Verifying...

Please wait while we verify your request.
